LEADTOOLS and Windows Media Foundation

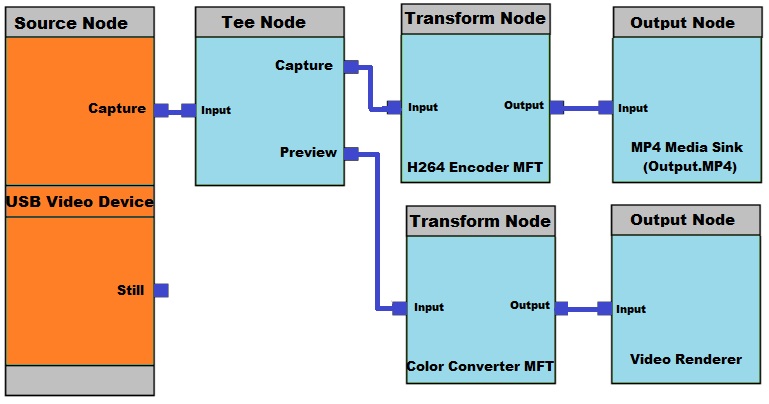

Figure 1: A simple capture topology

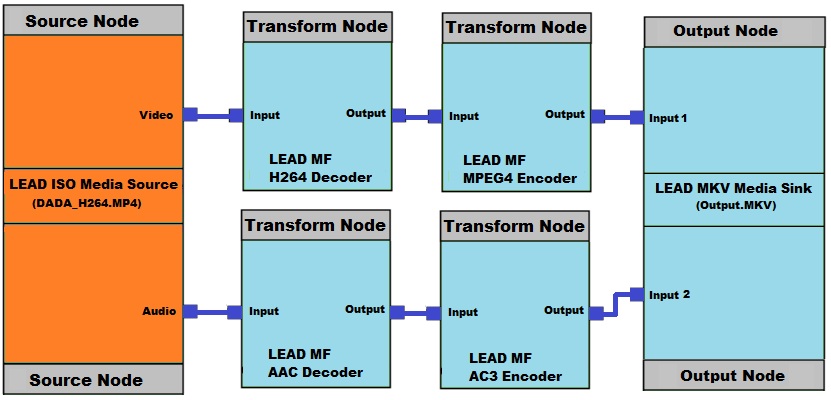

Figure 2: A simple conversion topology

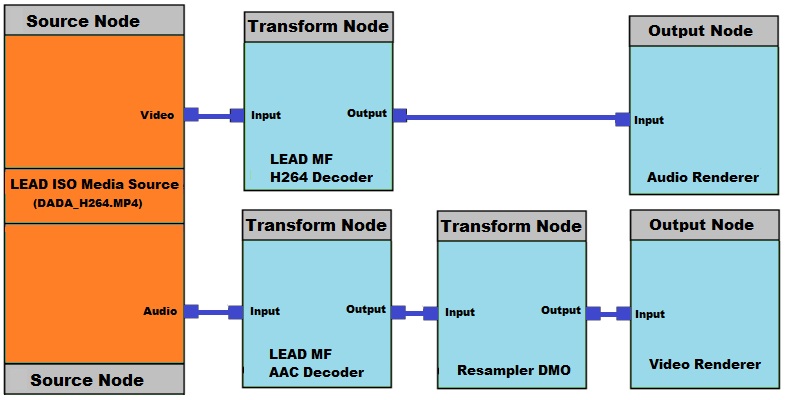

Figure 3: A simple playback topology

What is Windows Media Foundation®?

Microsoft Windows Media Foundation is the next-generation multimedia platform for Windows. It enables both developers and users to handle and process media content with improved robustness, quality, and interoperability. Its pipeline and infrastructure are based on the Microsoft Component Object Model (COM) and provide a common interface for media across many of Microsoft's programming languages. It can render or record media files on demand, at the request of either the user or the developer. Windows Media Foundation offers high-quality audio and video playback, support for high-definition content (HDTV), content protection, and a more unified approach to digital rights management (DRM) and its interoperability. It also integrates DXVA 2.0, which offloads more of the video processing pipeline to hardware for better performance.

Microsoft produced the DirectShow® multimedia framework and API to replace the Video for Windows (VFW) technology, enabling software developers to perform various operations on media files. DirectShow® development tools and documentation are distributed as part of the Microsoft Platform SDK. Microsoft plans to eventually replace DirectShow® with Windows Media Foundation (WMF), which was introduced with Windows Vista.

Components and Topology

The Microsoft Media Foundation® pipeline divides a multimedia processing task (such as video playback) into a set of steps. Each step or stage in the processing of the data is performed by one of the Media Foundation components. The main components are the Media Source, the Media Sink, and Media Foundation Transforms (MFTs). The connections between the components, and the flow of stream data between them, are determined by the topology.

The Windows Media Foundation® architecture uses a Topology object to represent how data flows through the pipeline and to describe the path that each stream takes. Each Media Foundation component in the pipeline (media source, transform, or media sink) is represented in the topology as a node. Developers can add custom effects or other transforms at any stage in the topology by adding a node that represents their component, then rendering the results to a file or URL, or presenting them for playback.
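The idea of a topology as a set of connected nodes can be illustrated with a small conceptual model. The sketch below is plain Python, not the Media Foundation API: the `Node` class, the `connect` method, and the `paths` helper are hypothetical names introduced only for illustration, and the node labels are examples.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """Hypothetical stand-in for one node in a topology."""
    name: str
    kind: str  # "source", "transform", or "output"
    outputs: list = field(default_factory=list)

    def connect(self, downstream):
        """Record a connection and return the downstream node for chaining."""
        self.outputs.append(downstream)
        return downstream


def paths(node, prefix=()):
    """Return every path from `node` to an end node as a tuple of names."""
    prefix = prefix + (node.name,)
    if not node.outputs:
        return [prefix]
    found = []
    for downstream in node.outputs:
        found.extend(paths(downstream, prefix))
    return found


# A playback-style chain with a custom transform inserted after the decoder.
source = Node("file source (video stream)", "source")
decoder = Node("video decoder", "transform")
resize = Node("video resize transform", "transform")
renderer = Node("video renderer", "output")
source.connect(decoder).connect(resize).connect(renderer)

for path in paths(source):
    print(" -> ".join(path))
```

Adding a custom effect amounts to creating one more transform node and connecting it between two existing nodes. The rest of the chain is unchanged.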

Component Definitions

Since the entire concept of rendering, converting, and capturing files in Windows Media Foundation is based on components and topologies, it is important to understand the role of each one.

Media Source. The media source is responsible for reading and splitting the media streams. It is usually the first component (by data flow) in the topology. The data can come from a file on disk, a network, a hardware device, or any other origin. Each media source contains one or more streams, and each stream delivers data of one type, such as audio or video.

Video/Audio Decoder. Video and audio decoders are Media Foundation Transform (MFT) components that handle the actual decoding or decompression of the data. They do not parse data, so the data must be split into streams before it is passed to the decoder. For this reason they are usually connected to the media source output. For example, the video decoder input might be a compressed video stream such as MPEG-2, and the output could be raw video data.

Renderer. Renderers are Media Sinks that present data for playback. Data can be audio, video, or both. For example, when playing a media file with both audio and video, a video renderer would handle displaying the video on the screen, and an audio renderer would handle directing the audio data to the sound device. The input of the renderer is usually uncompressed data coming from the decoder.

Audio/Video Encoder. Audio and video encoders are Media Foundation Transform (MFT) components that compress audio or video data. The input is usually uncompressed audio or video data, and the output is the compressed version of the same data.

Media Sink. Media sinks join media streams and handle writing the data to disk to create a media file. A media sink can also present content on the screen or on a sound device, as a renderer does. The media sink is usually the last component (by data flow) in the topology. Input is usually compressed data from an audio/video encoder. The output is a single stream containing both video and audio data.

Video/Audio Processor (Transform). Video and audio processors are Media Foundation Transform (MFT) components that are used to perform some type of data processing or generate some type of event. LEAD has created many video and audio processing transforms, such as the Video Resize Transform, used to resize a video stream. Usually, these transforms can only handle uncompressed data, so they would be inserted in the topology before the encoder or after the decoder.

Media Session and Topology

Microsoft Media Foundation® provides the Media Session object to set up topologies and control the data flow. The Media Session holds partial topologies and uses the Topology Loader to turn each one into a full topology. The Topology Loader is a Media Foundation object that creates the necessary connections between components. Creating these connections is called "resolving the topology".

Topologies within a media session are widely used in video playback, in which the Media Foundation components (Media Source, Media Sink, and MFTs) provide steps such as file parsing, video and audio de-multiplexing (splitting), decompressing, and rendering. They are also used for video and audio recording, transcoding, and editing. While resolving the topology, the Topology Loader searches the Windows Registry for registered Media Foundation components. It builds the complete topology and connects the components together. The Media Session then plays, pauses, and otherwise controls the data flow at the developer's request, based on the created topology. TopoEdit, a free utility that ships with the Windows SDK, can be used to build and resolve topologies and test media sources, media sinks, and transforms.
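Resolving a topology can be pictured as filling the gaps in a partial chain. The following is a deliberately simplified sketch in plain Python and does not use or reproduce the Media Foundation API. The `REGISTERED_DECODERS` dictionary is a hypothetical stand-in for the registry lookup, the format labels are illustrative strings, and `resolve` is an invented helper that only inserts decoders.

```python
# Hypothetical stand-in for the registry of installed decoders:
# (compressed input format, uncompressed output format) -> decoder name.
REGISTERED_DECODERS = {
    ("H264", "RAW_VIDEO"): "H264 decoder",
    ("MPEG2", "RAW_VIDEO"): "MPEG-2 decoder",
}


def resolve(partial):
    """Turn a partial chain into a full one by inserting missing decoders.

    `partial` lists (name, input_format, output_format) tuples in data-flow
    order. Whenever two neighbours disagree on the format, a registered
    decoder is looked up and placed between them.
    """
    full = [partial[0]]
    for node in partial[1:]:
        upstream_output = full[-1][2]
        wanted_input = node[1]
        if upstream_output != wanted_input:
            key = (upstream_output, wanted_input)
            if key not in REGISTERED_DECODERS:
                raise LookupError(
                    f"no registered component converts {upstream_output} "
                    f"to {wanted_input}"
                )
            full.append((REGISTERED_DECODERS[key], upstream_output, wanted_input))
        full.append(node)
    return [name for name, _, _ in full]


# Partial playback chain: only the source and the renderer are specified.
playback = [
    ("MKV media source", None, "H264"),
    ("video renderer", "RAW_VIDEO", None),
]
print(resolve(playback))
```

In this model the developer specifies only the endpoints, and the missing decoder is found by lookup. If no registered component bridges the two formats, resolution fails instead of producing a broken chain.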

Connecting Media Foundation Components

The data-processing components in the pipeline (media sources, transforms, and media sinks) are represented in a topology as nodes. The flow of data from one component to another is represented by a connection between the nodes. There are four types of topology nodes:

Source Node. A source node represents a media stream from a media source.

Transform Node. A transform node represents a Media Foundation Transform (MFT).

Output Node. An output node represents a stream sink on a media sink.

Tee Node. A tee node does not correspond to a Media Foundation pipeline component. It directs the data flow by acting as a fork in a stream.

Each component in a topology handles a specific task, and each one is usually designed to handle a specific type of data or stream.

For example, to create an MKV file with H264 compressed video, use an H264 Encoder and an MKV Media Sink. Most likely, the H264 Encoder will only create H264 compressed data, and the MKV Media Sink will accept H264 video as one of its supported video compressions. Certain types of audio can also be accepted as inputs. The same holds true for decoding and demultiplexing. An H264 Decoder will only decode H264 video, and an MKV Media Source will only accept as input files whose streams contain H264 video and certain types of audio.

When you try to resolve the topology and connect transforms that do not agree on data types, the connection is usually refused and the media session will not run. This is why it is important to know which media types each Media Foundation component supports.

For example, Table 1 lists the media types supported by the LEAD MKV Media Sink. If you attempt to connect any media type other than those listed to the input of the LEAD MKV Media Sink, it will refuse the connection.

Table 1: Media Types Supported by the LEAD MKV Media Sink

| Media Type | Video | Audio |
|---|---|---|
| Type | MEDIATYPE_Video | MEDIATYPE_Audio |
| Subtypes | MEDIASUBTYPE_VP8 | MEDIASUBTYPE_Vorbis |
| | MEDIASUBTYPE_MPEG2_VIDEO | MEDIASUBTYPE_AC3 |
| | MEDIASUBTYPE_LISO | MEDIASUBTYPE_MPEG1Audio |
| | MEDIASUBTYPE_LMPG2 | MEDIASUBTYPE_PCM |
| | MEDIASUBTYPE_H264 | |
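The accept-or-refuse behavior described above can be sketched as a simple lookup against the table. This is a conceptual model in plain Python, not LEADTOOLS or Media Foundation code. The identifiers are copied from the table as strings, while `SUPPORTED_BY_MKV_SINK`, `sink_accepts`, and the placeholder `MEDIASUBTYPE_NotInTable` are hypothetical names used only for illustration.

```python
# Media types from the table above, keyed by major type.
SUPPORTED_BY_MKV_SINK = {
    "MEDIATYPE_Video": {
        "MEDIASUBTYPE_VP8",
        "MEDIASUBTYPE_MPEG2_VIDEO",
        "MEDIASUBTYPE_LISO",
        "MEDIASUBTYPE_LMPG2",
        "MEDIASUBTYPE_H264",
    },
    "MEDIATYPE_Audio": {
        "MEDIASUBTYPE_Vorbis",
        "MEDIASUBTYPE_AC3",
        "MEDIASUBTYPE_MPEG1Audio",
        "MEDIASUBTYPE_PCM",
    },
}


def sink_accepts(major_type, subtype):
    """Return True if the (major type, subtype) pair appears in the table."""
    return subtype in SUPPORTED_BY_MKV_SINK.get(major_type, set())


print(sink_accepts("MEDIATYPE_Video", "MEDIASUBTYPE_H264"))        # listed
print(sink_accepts("MEDIATYPE_Video", "MEDIASUBTYPE_Vorbis"))      # audio subtype on a video input
print(sink_accepts("MEDIATYPE_Audio", "MEDIASUBTYPE_NotInTable"))  # placeholder, not listed
```

Note that the subtype is checked per major type, so an audio subtype offered on a video input is refused even though it appears elsewhere in the table.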

Some Common Topologies

Figures 1, 2, and 3 illustrate basic topologies for capture, conversion, and playback, respectively.

Capture and Control

Microsoft Windows Media Foundation® also has many interfaces and attributes which allow for capturing from and controlling many types of webcams, TV tuners, and other devices. Options include controlling the TV tuner, and setting many common device properties such as capture size, color space, and frame rate.

However, actual control over the device is limited by what the manufacturer of that device has exposed, and what has been implemented in the device itself. For example, one device may support capturing at 3 different resolutions, while another supports 10. One device may allow you to change the frame rate, while another may not.
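Because the supported settings differ from device to device, application code typically has to choose from whatever a given device reports rather than assume a fixed value. The sketch below shows one possible selection policy in plain Python. It is not a Media Foundation or LEADTOOLS call: `pick_capture_size` is a hypothetical helper, and the resolution lists are invented example data.

```python
def pick_capture_size(requested, supported):
    """Return the supported (width, height) closest in pixel count to the request."""
    if not supported:
        raise ValueError("device reports no capture sizes")
    if requested in supported:
        return requested
    requested_pixels = requested[0] * requested[1]
    return min(
        supported,
        key=lambda size: abs(size[0] * size[1] - requested_pixels),
    )


# Invented example: a device that reports three capture resolutions.
device_sizes = [(320, 240), (640, 480), (1280, 720)]

print(pick_capture_size((640, 480), device_sizes))    # exact match is kept
print(pick_capture_size((1920, 1080), device_sizes))  # falls back to the nearest size
```

Closest pixel count is only one reasonable policy. An application might instead prefer to keep the aspect ratio, or never exceed the requested size.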

Where does the LEADTOOLS Media Foundation SDK Fit In?

Taking advantage of the many capabilities of Microsoft Windows Media Foundation® (connecting transforms programmatically, creating custom media sources, media sinks, and transforms, and so forth) can be quite complex, and programmers often complain about this complexity.

Microsoft Windows Media Foundation® Interfaces

LEAD's solution—the LEADTOOLS Media Foundation SDK—handles these issues "under the hood," and exposes this functionality to the developer through dozens of easy-to-use interfaces.

Three of these interfaces handle the most common tasks: ltmfCapture, ltmfPlay, and ltmfConvert. They simplify processes that use Windows Media Foundation®, such as connecting the correct transforms in the correct order, enumerating capture devices for capture, performing media playback, and many others.

Microsoft Windows Media Foundation® Extensions

By default, Microsoft Windows Media Foundation® supports several common media file formats, such as MP4, MP3, Windows Media Video, and plain static images. It is also completely extensible, and extensions allow it to support any available container format and any audio or video codec.

LEAD has created many of these extensions, which are included with the LEADTOOLS Media Foundation SDK. A current list of available encoders, decoders, media sinks, media sources, and transforms is available at LEADTOOLS Media Foundation Transforms (https://www.leadtools.com/sdk/multimedia/media-foundation).

References

MSDN Microsoft Media Foundation documentation. Retrieved on December 20, 2012.

Wikipedia Media Foundation entry. Retrieved on December 20, 2012.

LEADTOOLS Media Foundation API WebHelp

LEADTOOLS Media Foundation Transforms